Today, GreatHorn announced new functionality that helps us identify malicious Office 365 and G Suite credential theft phishing attacks. This post covers the research and technology that power it.

The Challenge of Spotting Credential Theft

Victims of poor cybersecurity measures often deal with issues that are primarily technological in nature: brute-force attacks on weak passwords, poorly chosen public-key encryption parameters, etc. However, a purely technological solution to issues such as spear phishing is doomed to fail. It doesn’t matter much how excellent your encryption algorithm is if you (or someone in your organization) decide to click on a link that takes you to a website that looks “oh so real.”

With software-as-a-service applications and cloud-based document sharing becoming the de facto norm, email accounts are now the entry point into a wealth of confidential information. No wonder credential theft attacks are on the rise. But even though the potential risks of such an attack are immense, can you really blame your employees for trusting a website that looks so realistic that even cybersecurity professionals might share sensitive information?

A Proactive Approach: Classifying Links on their Intent

A key problem is that spear phishing attacks prey on psychological weaknesses, such as an eagerness to trust. The solution to a problem like this is to provide email users and security administrators with tools to quickly understand the characteristics of links and credential requests. This approach is robust because, even if attackers change their tactics over time, this goal—of informing a user of malicious intent and recommending safe courses of action—will not change. We can carry out such a strategy if we have a lot of data and experience to fuel our recommendations.

It’s crucial to have a data platform that can respond appropriately to new threats. The typical approach to protecting against dangerous credential theft has been to use threat intelligence, that is, to create extensive databases of threats based on what has been identified either in labs or “in the wild”. When a new attack variant or type is detected, the databases are updated, but this reactive approach doesn’t undo the damage already caused by the phish, and it is particularly ineffective against highly targeted spear phishing attacks that don’t rely on volumetric spamming to get results. What’s required is a proactive approach that can examine a potentially malicious link, determine its risk, and take steps to protect the user.

An optimal solution for this widespread problem, then, would have the following characteristics:

- Proactive: Anticipate and prevent new threats instead of merely reacting to them

- Self-learning: Learn from new data without the need for excessive human intervention

- Pragmatic: Focus on high-value threats and on preventing the undesired outcome. What matters is whether a user looks at a page and decides to click, so we need a solution that uses computer vision techniques to see the page as the user would and advise them on the best decision.

Ultimately, we want to provide a platform for our users that can pre-analyze potential credential theft sites before a user interacts with them, and combine that analysis with other data about the link to determine its risk, protect users, and warn administrators about malicious links without requiring administrators to validate each link manually.

Enter a Convolutional Neural Network

A complete guide to these artificial intelligence mainstays is far beyond the scope of a single blog post, but we can still provide a good understanding of how they work and one way they can be applied to our spear phishing protection.

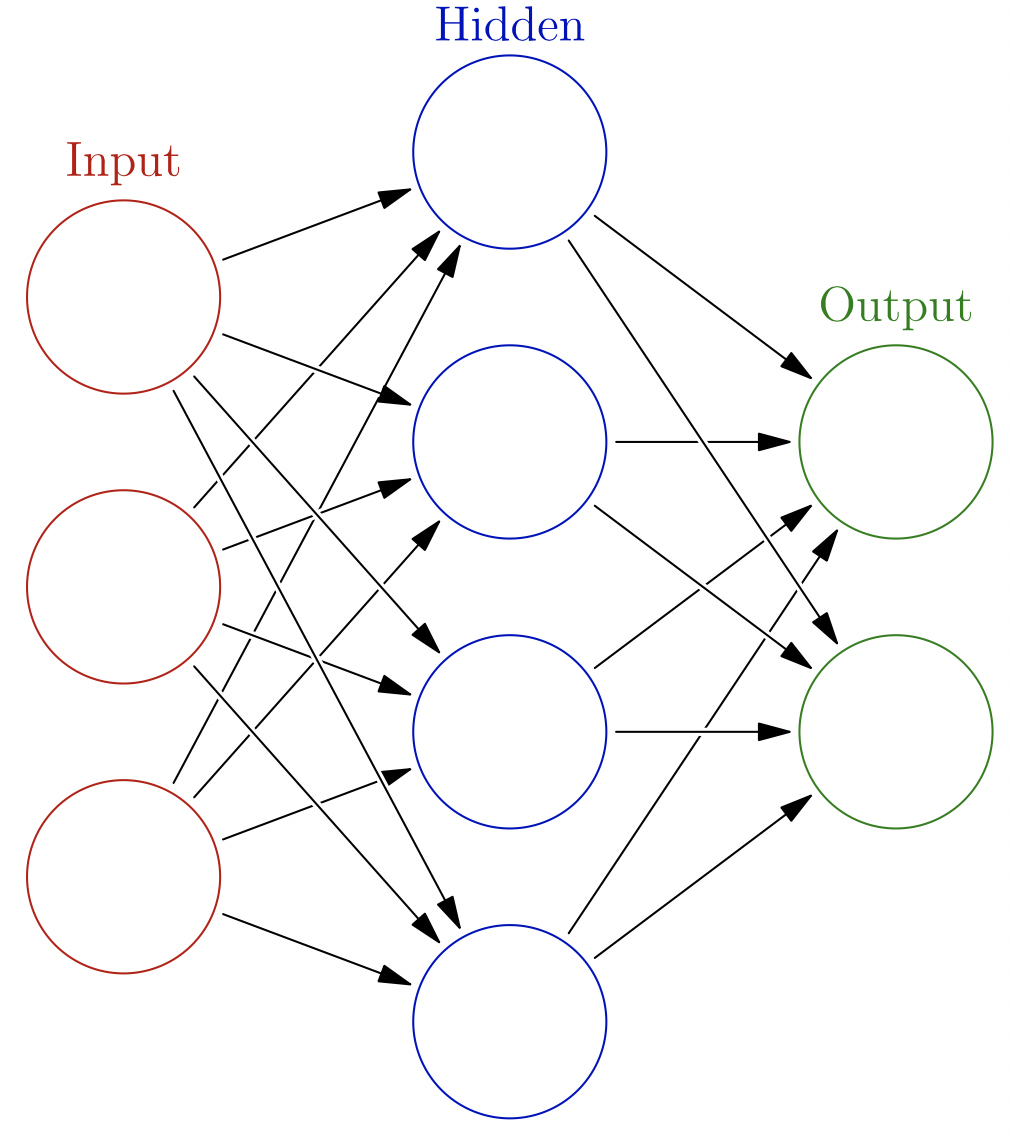

Figure 1: Neurons and how they interact. Source: Glosser.ca, licensed under Creative Commons

Perhaps neural networks make you think of neurons, a type of cell characterized by 1) responding to electrical stimulation and 2) being connected to other neurons (see Figure 1). A neuron can have several inputs and several outputs. The strengths of the connections between neurons are called weights. Just like biological neurons, artificial neurons have an activation threshold, above which the neuron will fire, affecting the neurons connected to its outputs. The result of all these firing neurons is an adjustment of the weights connecting these neurons. The neurons in neural networks were originally inspired by the ones in human brains, in particular by how human brains can learn at all. Nowadays, however, neurons in an AI context are no longer tied to their biological origins. In fact, you can think of a single neuron as being a classifier.
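To make the idea of a single neuron acting as a classifier concrete, here is a minimal sketch in plain Python. The function name `neuron_fires`, the weights, and the threshold are all made up for illustration; real networks typically use smoother activation functions than this hard cutoff.

```python
def neuron_fires(inputs, weights, threshold):
    """Return True when the weighted sum of the inputs exceeds the threshold."""
    activation = sum(x * w for x, w in zip(inputs, weights))
    return activation > threshold

# Hypothetical weights: the second input counts three times as much as the first.
weights = [1.0, 3.0]
threshold = 2.0

weak_signal = neuron_fires([1.0, 0.0], weights, threshold)    # weighted sum is 1.0
strong_signal = neuron_fires([0.0, 1.0], weights, threshold)  # weighted sum is 3.0
```

The neuron splits its inputs into two classes, "fires" and "does not fire", which is all a binary classifier does.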

If you connect many neurons together, with the outputs of one layer of neurons becoming the inputs to the next layer of neurons, you have a neural network. A network can be many layers deep. In fact, the popular field of deep learning deals with training these many-layered neural networks. The most common way to train a neural network is by providing it with correctly labeled examples. Imagine a neural network that’s trying to recognize handwritten numerals. You provide the network with many, many samples of handwritten numerals as well as the numeral each sample is meant to represent, and the learning algorithm adjusts the weights of the neurons so that the network’s prediction error is as small as possible. This method of training a network is called supervised learning, as opposed to unsupervised learning, where the samples are provided without labels.
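Supervised learning can be sketched with the classic perceptron rule, which is far simpler than what a deep network uses but follows the same loop: predict, compare with the label, adjust the weights. The toy data and the names `predict` and `train` are assumptions for illustration, not part of GreatHorn's system.

```python
def predict(weights, bias, inputs):
    """Fire (1) when the weighted sum plus bias is positive, else stay quiet (0)."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train(samples, epochs=20, learning_rate=1.0):
    """Perceptron rule: nudge the weights whenever a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, label in samples:
            error = label - predict(weights, bias, inputs)  # 0 when correct
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Toy labeled data (an assumption): the label is 1 only when both inputs are 1.
labeled_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(labeled_samples)
predictions = [predict(weights, bias, inputs) for inputs, _ in labeled_samples]
```

After training, `predictions` matches the labels for every sample. Recognizing handwritten numerals works on the same principle, only with many more inputs, neurons, and examples.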

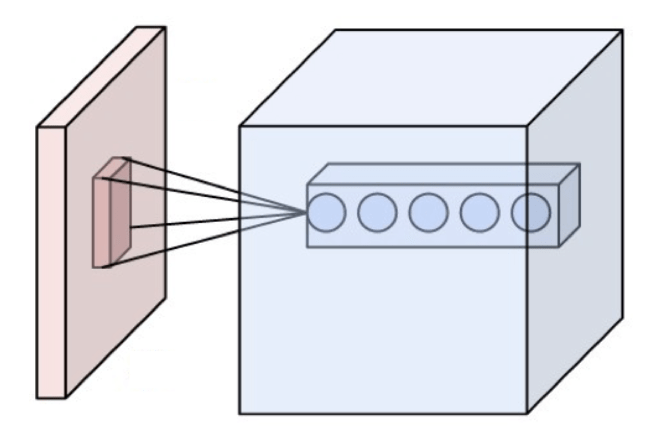

Figure 2: A Convolution. Source: Wikipedia, licensed under Creative Commons

What if we wanted to analyze image-based data? It turns out that a very successful strategy is to take an item of interest and decompose it into its salient features. For example, to recognize an image of a human face, you could first take a filter that looks for eyes, one that looks for noses, one that looks for mouths, etc., and look for images that activate all of these filters at once. This approach was inspired by how a cat’s brain visually processes objects in its field of view. A convolution is roughly the process of taking an image and applying a filter to it (see Figure 2). If the resulting output is large enough, the network could conclude that the image contains the visual element represented by the filter. A network that learns its weights by means of convolutions is called a convolutional neural network (CNN).
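Applying a filter to an image can be sketched in a few lines of plain Python. The function below slides a small filter over every position where it fits and sums the element-wise products, which is the operation CNN layers perform (strictly speaking a cross-correlation, since the filter is not flipped). The tiny image and the edge filter are made-up examples.

```python
def convolve2d(image, kernel):
    """Slide the kernel over every position where it fits and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# A tiny 4x4 grayscale image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical filter that responds to a dark-to-bright vertical edge.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

response = convolve2d(image, vertical_edge)
# response == [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The output is large only in the middle column, exactly where the dark-to-bright edge sits, and zero where the image is uniform.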

Such networks tend to learn hierarchically, meaning that in earlier layers of a deep network, first simple curves are learned, which are combined into higher level features like eyes and ears, which are combined into faces, which are combined into crowds, etc. A particular strength of CNNs is that they can recognize features anywhere in an image, which makes object detection and classification feasible.
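The claim that a feature can be recognized anywhere in an image follows from sliding the same filter over every position. As a rough sketch, taking the strongest response across all positions (a simple form of global max pooling) gives the same answer wherever the feature appears. The helper names and the 2x2 pattern are hypothetical.

```python
def filter_response(image, kernel):
    """Strongest response of the kernel over every position in the image."""
    kh, kw = len(kernel), len(kernel[0])
    return max(
        sum(image[r + i][c + j] * kernel[i][j]
            for i in range(kh) for j in range(kw))
        for r in range(len(image) - kh + 1)
        for c in range(len(image[0]) - kw + 1)
    )

def blank_image_with(pattern, top, left, size=6):
    """Paste a small pattern into an otherwise empty size x size image."""
    image = [[0] * size for _ in range(size)]
    for i, row in enumerate(pattern):
        for j, value in enumerate(row):
            image[top + i][left + j] = value
    return image

# Hypothetical 2x2 feature; the filter simply has the same shape as the feature.
corner = [[1, 1],
          [1, 0]]

top_left = blank_image_with(corner, top=0, left=0)
bottom_right = blank_image_with(corner, top=4, left=4)
empty = [[0] * 6 for _ in range(6)]

same_response = filter_response(top_left, corner) == filter_response(bottom_right, corner)
```

Here `same_response` is true, while the empty image produces a response of zero: the filter reports whether the feature is present, not where it is.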

We mentioned that CNNs have filters that can pick out salient features of objects, and you might ask where these filters come from. The CNN can create these filters itself, so long as you give it representative training examples! The filters may not match how a human (or any living being) would analyze an image, but the surprising result is that CNNs can match or even surpass human beings at classification tasks in some domains.

Because GreatHorn already has a substantial dataset, including screenshots of links that our users have yet to click on, we have begun using CNNs to pre-classify the links by their purpose (e.g., “Is a site requiring credentials”, “Is a Microsoft login page”, etc.).

We are taking advantage of our large data set to enhance our threat detection platform with computer vision techniques. We can automatically inform users about potentially dangerous websites, pages that “look so real” even though they belong to an attacker. Furthermore, we have built up a corpus of bona fide websites (e.g., official Microsoft Office 365 login pages) and can identify whether or not someone is trying to specifically impersonate a Microsoft login page. These techniques allow us to supplement and enhance our link protection and policy features.
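One way to picture how a visual classification might be combined with other data about a link is the sketch below. Everything in it is an assumption for illustration: the label names, the 0.8 threshold, the `assess_link` function, and the use of a hostname allowlist as a stand-in for a corpus of bona fide pages. It is not a description of GreatHorn's implementation.

```python
from urllib.parse import urlparse

# Assumption: a small hostname allowlist standing in for a corpus of bona fide pages.
KNOWN_MICROSOFT_LOGIN_HOSTS = {"login.microsoftonline.com", "login.live.com"}

def assess_link(url, scores, threshold=0.8):
    """Combine hypothetical CNN label scores with the link's hostname."""
    host = (urlparse(url).hostname or "").lower()
    asks_for_credentials = scores.get("requires_credentials", 0.0) >= threshold
    looks_like_microsoft = scores.get("microsoft_login_page", 0.0) >= threshold

    if looks_like_microsoft and host not in KNOWN_MICROSOFT_LOGIN_HOSTS:
        return "likely impersonation"   # looks official, hosted elsewhere
    if looks_like_microsoft:
        return "known login page"
    if asks_for_credentials:
        return "credential request"
    return "no warning"

# Made-up scores, as if produced by a screenshot classifier.
lookalike = assess_link(
    "https://login.example.com/office365",
    {"requires_credentials": 0.95, "microsoft_login_page": 0.93},
)
genuine = assess_link(
    "https://login.microsoftonline.com/common/oauth2/authorize",
    {"requires_credentials": 0.97, "microsoft_login_page": 0.96},
)
```

In this sketch the lookalike page is flagged because it looks like a Microsoft login page but is served from an unrelated host, while the genuine page is not.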

Tune in next time to see a start-to-finish example of image classification with code.